Storage Migration Activity:

Scenario: storage migration from EMC CLARiiON (old storage) to EMC V-Max (new storage).

We asked the storage team to allocate four new storage LUNs with the following sizes:

- 2 LUNs of 5 GB for the database redo logs
- 2 LUNs of 200 GB for the database

Scan for the newly added storage so that a single consolidated device is created for the different paths coming from the storage array.

Before scanning, check the current devices on the server by running:

[root@xxxxvm1 ~]# powermt display dev=all

or

[root@xxxxvm1 ~]# multipath -ll

If PowerPath is configured you will get detailed output for all devices such as emcpowera, emcpowerb; with dm-multipath you will get detailed output for devices such as mpath15, mpath16 and so on.

Then issue a LIP on each Fibre Channel host and rescan the SCSI hosts (host3 and host4 on this server):

[root@xxxxvm1 ~]# echo 1 > /sys/class/fc_host/host3/issue_lip
[root@xxxxvm1 ~]# echo 1 > /sys/class/fc_host/host4/issue_lip
[root@xxxxvm1 ~]# echo "- - -" > /sys/class/scsi_host/host3/scan
[root@xxxxvm1 ~]# echo "- - -" > /sys/class/scsi_host/host4/scan
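The rescan commands above hard-code host3 and host4. As a minimal sketch, the same rescan can be written as a loop over every FC host the server has. It assumes that each /sys/class/fc_host/hostN has a matching /sys/class/scsi_host/hostN entry, and rescan_fc_hosts is a hypothetical helper name, not part of any package. The sysfs root is a parameter only so the loop can be tried against a fake directory tree.

```shell
#!/usr/bin/env bash
# Sketch (assumes the standard sysfs layout): issue a LIP on every FC host and
# rescan the matching SCSI host, instead of hard-coding host3/host4.
# The sysfs root defaults to /sys/class; on a real server this must run as root.
rescan_fc_hosts() {
    local sysfs="${1:-/sys/class}"
    local lip host
    for lip in "$sysfs"/fc_host/host*/issue_lip; do
        [ -e "$lip" ] || continue                      # glob matched nothing: no FC hosts
        host=$(basename "$(dirname "$lip")")           # e.g. host3
        echo 1 > "$lip"                                # force a loop initialization (LIP)
        echo "- - -" > "$sysfs/scsi_host/$host/scan"   # wildcard channel, target, LUN
        echo "rescanned $host"
    done
}
```

On the server, call it as root with no argument and then continue with powermt config.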

Next, have PowerPath configure the newly discovered paths:

[root@xxxxvm1 ~]# powermt config

After this, check the newly created device by running:

[root@xxxxvm1 ~]# powermt display dev=all

This shows all previous devices as well as the newly created device emcpowerX, where X is the next available letter (a, b, c, …).

Example:

Say the previous step discovered a newly added device, emcpowerf.

Check the device:

state=alive; policy=SymmOpt; priority=0; queued-IOs=0

| Host | HW Path | I/O Paths | Interf. | Mode | State | Q-IOs | Errors |
|---|---|---|---|---|---|---|---|
| 3 | qla2xxx | sdo | FA 8cB | active | alive | 0 | 0 |
| 4 | qla2xxx | sdp | FA 9cB | active | alive | 0 | 0 |

Create a physical volume on top of this new device:

[root@xxxxvm1 ~]# pvcreate /dev/emcpowerf

Next, add this PV to the existing volume group:

[root@xxxxvm1 ~]# vgextend vgora /dev/emcpowerf
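To confirm that the vgextend took effect, a small check can read the PV-to-VG mapping printed by pvs. This is a sketch: pv_in_vg is a hypothetical helper, and it reads the listing from stdin (in the format of `pvs --noheadings -o pv_name,vg_name`) so that it can be exercised without LVM.

```shell
#!/usr/bin/env bash
# Sketch: succeed only if DEVICE is listed as a PV of VOLUME_GROUP.
# Expects "pv_name vg_name" lines on stdin, as printed by
#   pvs --noheadings -o pv_name,vg_name
# (a PV that is not in any VG yet prints an empty vg_name).
pv_in_vg() {
    local want_dev="$1" want_vg="$2" dev vg
    while read -r dev vg; do
        if [ "$dev" = "$want_dev" ] && [ "$vg" = "$want_vg" ]; then
            return 0
        fi
    done
    return 1
}

# Usage on the server (as root):
#   pvs --noheadings -o pv_name,vg_name | pv_in_vg /dev/emcpowerf vgora && echo "emcpowerf is in vgora"
```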

The new device is now part of the existing volume group.

There are two different approaches to achieve this storage migration:

- Use the safe and simple cp command; for this we need to create the same disk layout on the new LUNs before starting the copy.

- Use the LVM2 pvmove functionality; for this the new LUNs must be the same size as, or larger than, the old ones.

Example 1: First approach, using the cp command:

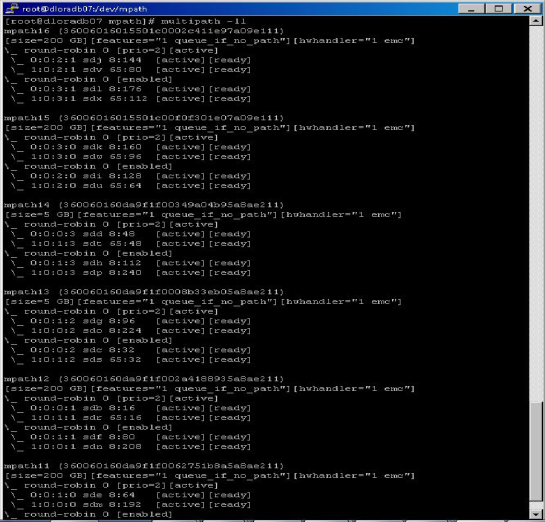

The screenshot below shows the LUNs we asked for.

LUN details:

- mpath11 and mpath12: 200 GB each
- mpath9 and mpath10: 5 GB each

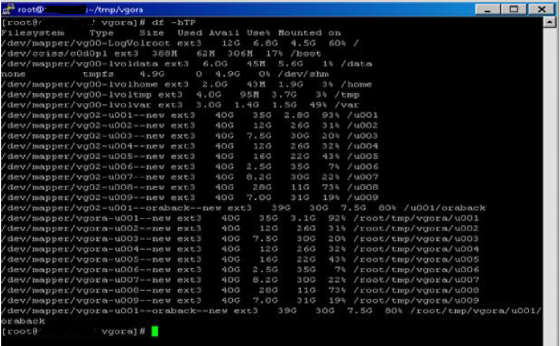

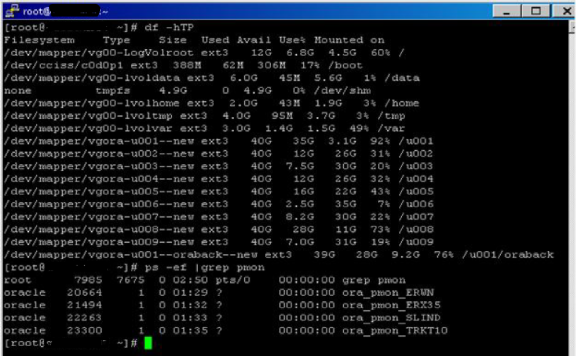

Creating the new volume layout for the data migration:

Mounting the new volumes under a temporary directory (/root/tmp/vgora in the copy commands below):
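The new layout itself is only shown in screenshots. As a hedged sketch of the usual sequence per filesystem (create the logical volume, make a filesystem, mount it), the helper below only prints the commands for review. plan_new_fs and the volume group and logical volume names in the usage line are hypothetical, and it assumes the new LUNs are already in a volume group of their own; the real sizes and filesystem type should be taken from the old layout (for example from lvs and df -T).

```shell
#!/usr/bin/env bash
# Sketch (dry run): print the commands that create one logical volume,
# put a filesystem on it and mount it as a copy target. Nothing is executed.
plan_new_fs() {
    local vg="$1" lv="$2" size="$3" fstype="$4" mnt="$5"
    echo "lvcreate -L $size -n $lv $vg"
    echo "mkfs -t $fstype /dev/$vg/$lv"
    echo "mkdir -p $mnt"
    echo "mount /dev/$vg/$lv $mnt"
}

# Usage with hypothetical names (review the output, then run it as root):
#   plan_new_fs vgora_new lv_u001 <size> <fstype> /root/tmp/vgora/u001
```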

Commands used to migrate the data:

# nohup cp -r --preserve=mode,ownership,timestamps /u001/. /root/tmp/vgora/u001 &
# nohup cp -r --preserve=mode,ownership,timestamps /u002/. /root/tmp/vgora/u002 &
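cp only produces a consistent copy when nothing writes to the source while it runs, so this approach assumes the database is shut down during the copy. The sketch below (copy_and_verify is a hypothetical helper) wraps the same cp invocation and then compares the two trees with diff -r, which checks file contents and files present on only one side, but not ownership or timestamps.

```shell
#!/usr/bin/env bash
# Sketch: copy one filesystem tree into its new mount point, then compare.
# "$src"/. (rather than "$src"/*) makes cp include top-level hidden files.
copy_and_verify() {
    local src="$1" dst="$2"
    cp -r --preserve=mode,ownership,timestamps "$src"/. "$dst" || return 1
    # diff -r returns non-zero if any file differs or exists on one side only.
    # It does not compare ownership or timestamps.
    diff -r "$src" "$dst" > /dev/null
}

# Usage (as root, database shut down, run in the foreground):
#   copy_and_verify /u001 /root/tmp/vgora/u001 && echo "u001 copied OK"
```

Running it in the foreground (rather than with a trailing &) means the comparison starts only after the copy has finished.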

Finally, the data was migrated successfully and the database is now running without reporting any issues.

Example 2: Second approach, using the LVM pvmove command:

Prepare the disks

First, run pvcreate on the new disks to make them available to LVM. Note that a disk does not need to be partitioned before it can be used as a physical volume.

# pvcreate /dev/emcpowerh /dev/emcpoweri /dev/emcpowerj /dev/emcpowerk
pvcreate -- physical volume "/dev/emcpowerh" successfully created
pvcreate -- physical volume "/dev/emcpoweri" successfully created
pvcreate -- physical volume "/dev/emcpowerj" successfully created
pvcreate -- physical volume "/dev/emcpowerk" successfully created

Add them to the volume group

The data to be migrated lives in vgora, so the new PVs are added to that volume group.

# vgextend vgora /dev/emcpowerh /dev/emcpoweri /dev/emcpowerj /dev/emcpowerk
vgextend -- INFO: maximum logical volume size is 555.99 Gigabyte
vgextend -- doing automatic backup of volume group "vgora"
vgextend -- volume group "vgora" successfully extended

Move the data

Next, move the data from the old disk onto the new one. It is not necessary to unmount the file system first, but it is highly recommended to take a full backup before attempting this operation in case a power outage or some other problem interrupts it. pvmove can take a considerable amount of time to complete and it imposes a performance hit on both volumes, so although it is not required, it is advisable to run it when the volumes are not busy.
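pvmove needs enough free extents on the destination PV to hold everything allocated on the source PV. After the vgextend both PVs are in vgora and therefore share the same extent size, so comparing extent counts is enough. A minimal sketch of that pre-check (pvmove_fits is a hypothetical helper) reads the output of `pvs --noheadings -o pv_name,pv_pe_count,pv_pe_alloc_count` from stdin:

```shell
#!/usr/bin/env bash
# Sketch: check that DST has at least as many free extents as SRC has allocated.
# Expects "pv_name pe_count pe_alloc_count" lines on stdin, as printed by
#   pvs --noheadings -o pv_name,pv_pe_count,pv_pe_alloc_count
# Returns 0 if it fits, 1 if not, 2 if either PV is missing from the input.
pvmove_fits() {
    local src="$1" dst="$2" name total alloc
    local src_alloc="" dst_free=""
    while read -r name total alloc; do
        if [ "$name" = "$src" ]; then
            src_alloc=$alloc
        fi
        if [ "$name" = "$dst" ]; then
            dst_free=$((total - alloc))
        fi
    done
    if [ -z "$src_alloc" ] || [ -z "$dst_free" ]; then
        return 2
    fi
    [ "$dst_free" -ge "$src_alloc" ]
}

# Usage on the server (as root):
#   pvs --noheadings -o pv_name,pv_pe_count,pv_pe_alloc_count \
#     | pvmove_fits /dev/emcpowera /dev/emcpowerh && echo "destination is large enough"
```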

# pvmove /dev/emcpowera /dev/emcpowerh
pvmove -- moving physical extents in active volume group "vgora"
pvmove -- WARNING: moving of active logical volumes may cause data loss!
pvmove -- do you want to continue? [y/n] y
pvmove -- 249 extents of physical volume "/dev/emcpowera" successfully moved

Remove the unused disk

We can now remove the old device, /dev/emcpowera, from the volume group.

# vgreduce vgora /dev/emcpowera
vgreduce -- doing automatic backup of volume group "vgora"
vgreduce -- volume group "vgora" successfully reduced by physical volume:
vgreduce -- /dev/emcpowera
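After vgreduce the old device still carries an LVM label; pvremove wipes it so that LVM no longer recognises the LUN as a physical volume. The sketch below strings the two steps together behind a dry-run switch. retire_old_pv, run and DRY_RUN are hypothetical names, and by default the commands are only printed.

```shell
#!/usr/bin/env bash
# Sketch: remove the old PV from the VG, then wipe its LVM label.
# DRY_RUN=1 (the default here) only prints the commands; DRY_RUN=0 runs them.
run() {
    if [ "${DRY_RUN:-1}" = 1 ]; then
        echo "+ $*"
    else
        "$@"
    fi
}

retire_old_pv() {
    local vg="$1" dev="$2"
    run vgreduce "$vg" "$dev" || return 1   # stop if the PV is still in use
    run pvremove "$dev"
}

# Usage (as root): DRY_RUN=0 retire_old_pv vgora /dev/emcpowera
```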

Finally, the data was migrated successfully and the database is now running without reporting any issues.

Hi Amit,

Nice article buddy. Keep going.

BTW, I would like to bring a couple of points in this article to your notice.

1st: dm-* devices should not be used to create LVM devices or filesystems (this is not recommended by Red Hat).

2nd: Please use “.” instead of “*” in the cp command below, as hidden files will be skipped if you use “*” with cp.

nohup cp -r --preserve=mode,ownership,timestamps /u001/* /root/tmp/vgora/u001 &

Thanks again for sharing such valuable info 🙂

The requested changes have been made. Thanks, Arunabh, for the suggestions and for spending the time. :)

Nice article, Amit. I do this frequently too, so I appreciate it.

Perfect…!!
